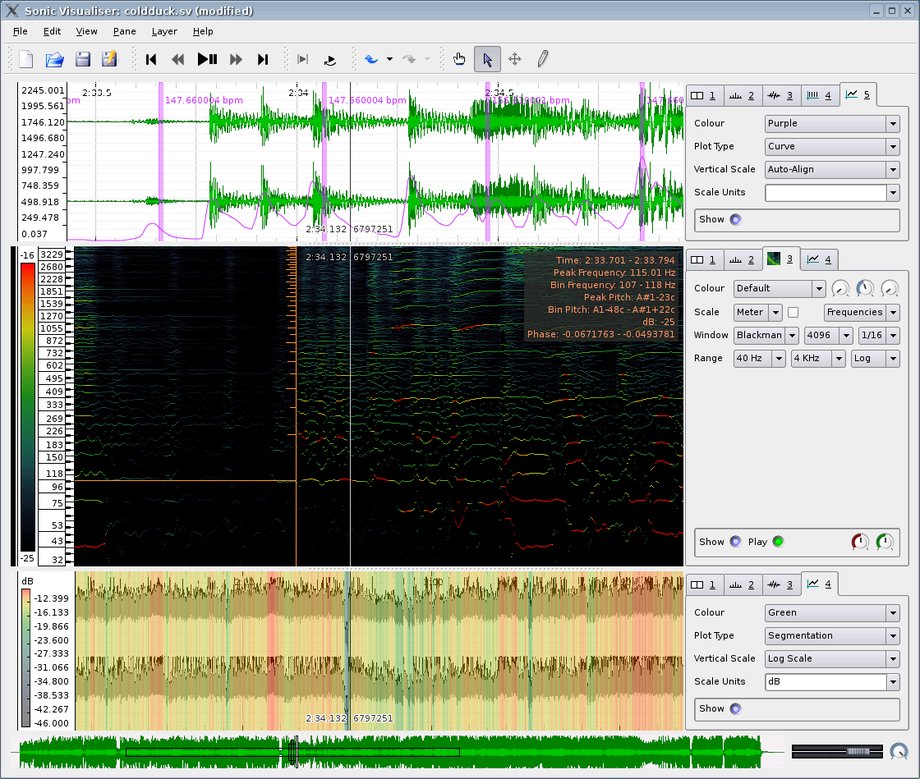

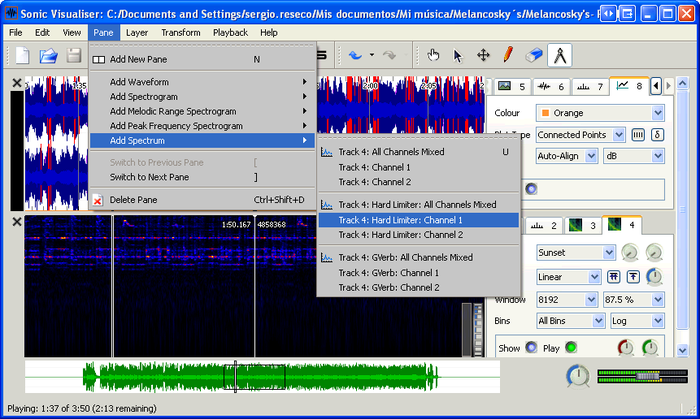

Musical prosody is characterized by the acoustic variations that make music expressive. However, few systematic and scalable studies exist on the function it serves or on effective tools to carry out such studies. To address this gap, we introduce a novel approach to capturing information about prosodic functions through a citizen science paradigm. In typical bottom-up approaches to studying musical prosody, acoustic properties in performed music and basic musical structures such as accents and phrases are mapped to prosodic functions, namely segmentation and prominence. In contrast, our top-down, human-centered method puts listener annotations of musical prosodic functions first, to analyze the connection between these functions, the underlying musical structures, and acoustic properties. The method is applied primarily to the exploration of segmentation and prominence in performed solo piano music. These prosodic functions are marked by means of four annotation types (boundaries, regions, note groups, and comments) in the CosmoNote web-based citizen science platform, which presents the music signal or MIDI data and related acoustic features in information layers that can be toggled on and off. Various annotation strategies are discussed and appraised: intuitive vs. … The end-to-end process of the data collection is described, from the provision of prosodic examples to the structuring and formatting of the annotation data for analysis, to techniques for preventing precision errors. The aim is to obtain reliable and coherent annotations that can be applied to theoretical and data-driven models of musical prosody. The outcomes include a growing library of prosodic examples with the goal of achieving an annotation convention for studying musical prosody in performed music.

I'm using Yuki no Hana (Artist: Mika Nakashima). It contains a multi-BPM part at the beginning.

In Sonic Visualiser, choose Layer -> Add Melodic Range Spectrogram -> All Channels Mixed.

This shows the melodic range spectrogram of the song. The dark blue lines indicate the notes of the song, and the frequency axis on the left indicates the pitch. If the song is "clean" enough, you can actually pick out individual notes. The first part forms a G#m7 (G# - B - D# - F#) chord, or VIm7 chord. Once you know which note is which sound, pulling out the timing is easy: find the time of each note in milliseconds and calculate the corresponding BPM (beats per minute).

This is the region where the BPM is constantly changing. You can see the notes separating more and more. Use the previous technique to define a BPM for each note.

Using Sonic Visualiser to detect drum kicks

This shows the melodic range spectrogram of dimension tripper (Artist: nao). Let's focus on the lower part of the spectrum, which shows the drum kick pattern (around 35 Hz). This way the drum kicks are always quite visible, even when the song itself is quite "noisy". These two drum kicks should be 2 beats apart; at 188 BPM that means 60/188 * 2 = 0.638 s. This is very useful if you are trying to detect the BPM and timing sections of songs that have drum kicks. It is also a good reference for mapping these drum kicks.
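To relate the G#m7 chord in Yuki no Hana to the frequency axis, it can help to know roughly where each note should appear. The sketch below assumes standard A4 = 440 Hz tuning and 12-tone equal temperament. The octave voicing (G#3, B3, D#4, F#4) and the helper names are assumptions for illustration and were not read from the recording.

```python
# Equal-temperament frequencies for chord notes, to help locate them on the
# spectrogram's frequency axis. Assumes A4 = 440 Hz and C4 = MIDI note 60.

NOTE_OFFSETS = {"C": 0, "C#": 1, "D": 2, "D#": 3, "E": 4, "F": 5,
                "F#": 6, "G": 7, "G#": 8, "A": 9, "A#": 10, "B": 11}


def note_to_midi(name: str, octave: int) -> int:
    """MIDI note number, using the convention that C4 = 60."""
    return 12 * (octave + 1) + NOTE_OFFSETS[name]


def midi_to_hz(midi: int, a4: float = 440.0) -> float:
    """Frequency of a MIDI note in 12-tone equal temperament."""
    return a4 * 2 ** ((midi - 69) / 12)


def chord_frequencies(notes):
    """Map (name, octave) pairs to frequencies in Hz, rounded to 0.1 Hz."""
    return {f"{n}{o}": round(midi_to_hz(note_to_midi(n, o)), 1) for n, o in notes}


if __name__ == "__main__":
    # Hypothetical voicing of G#m7: G#3 - B3 - D#4 - F#4
    g_sharp_m7 = [("G#", 3), ("B", 3), ("D#", 4), ("F#", 4)]
    for label, hz in chord_frequencies(g_sharp_m7).items():
        print(f"{label}: {hz} Hz")
```

If the recording is tuned differently, passing another `a4` value to `midi_to_hz` shifts every note by the same ratio.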
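The BPM step for Yuki no Hana is simple arithmetic. A gap of Δ milliseconds between notes one beat apart corresponds to 60000 / Δ BPM. This is a minimal sketch that assumes each listed onset falls exactly one beat after the previous one. The onset times are hypothetical values, not measurements from the song, and the function names are illustrative.

```python
# Estimate the BPM between consecutive note onsets read off the spectrogram.
# Assumes consecutive onsets are `beats_per_gap` beats apart (default: 1 beat).

def bpm_from_interval(interval_ms: float, beats: float = 1.0) -> float:
    """BPM implied by `beats` beats lasting `interval_ms` milliseconds."""
    if interval_ms <= 0:
        raise ValueError("interval must be positive")
    return 60_000.0 * beats / interval_ms


def bpm_per_gap(onsets_ms, beats_per_gap=1.0):
    """One BPM value per pair of consecutive onsets (useful in multi-BPM parts)."""
    return [
        round(bpm_from_interval(b - a, beats_per_gap), 2)
        for a, b in zip(onsets_ms, onsets_ms[1:])
    ]


if __name__ == "__main__":
    # Hypothetical onset times in ms: the gaps widen, so the tempo slows down.
    onsets = [1000, 1500, 2020, 2560, 3120]
    print(bpm_per_gap(onsets))  # [120.0, 115.38, 111.11, 107.14]
```

When notes fall on half beats instead of whole beats, passing `beats_per_gap=0.5` gives the tempo of the underlying beat.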
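The drum kick check for dimension tripper can be sketched the same way. At a given BPM, n beats last 60 / BPM × n seconds, so two kicks 2 beats apart at 188 BPM should be about 0.638 s apart. The function names and the 10 ms tolerance are assumptions for illustration, and the "measured" spacing is a hypothetical value.

```python
# Expected time between drum kicks a given number of beats apart, plus a check
# of a measured kick spacing against it (10 ms default tolerance is an assumption).

def beat_interval_s(bpm: float, beats: float = 1.0) -> float:
    """Seconds spanned by `beats` beats at `bpm` beats per minute."""
    return 60.0 / bpm * beats


def matches_bpm(measured_s: float, bpm: float, beats: float, tol_s: float = 0.01) -> bool:
    """True if a measured kick spacing is within `tol_s` seconds of the expected one."""
    return abs(measured_s - beat_interval_s(bpm, beats)) <= tol_s


if __name__ == "__main__":
    expected = beat_interval_s(188, beats=2)
    print(f"{expected:.3f} s")                    # 0.638 s, as in the text
    # Hypothetical spacing read off the spectrogram (not a real measurement):
    print(matches_bpm(0.640, bpm=188, beats=2))    # True
```

If a measured spacing fails the check, the BPM guess or the assumed number of beats between the kicks is probably off.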
